Without content, OpenAI's Sora & generative AI tech are essentially useless: so shouldn't artists get paid?

Tech "unicorns" are everywhere, but where are the media unicorns?

Wake up! It’s time for your Monday morning AI media and entertainment brAIn dump! Today’s “mAIn event” (feature story) was spurred by continuously reading headlines of new privately-held generative AI tech “startups” achieving “unicorn” status (meaning they’re valued at over $1 billion). Made me wonder, where are the media unicorns? Content, after all, is THE necessary ingredient for generative AI tech to even function and have any utility. My feature may put things in a new light.

Next, you’ll watch “the trAIler” - 10 key media-related AI headlines from last week (the “AI:10”). Then, you deserve “the cocktAIl” - my AI mixology that includes a link to register for this Wednesday’s (March 27th) virtual AI event featuring Stewart Copeland of legendary band The Police (trust me, you’ll want to see it). Finally, “the AI legal case tracker” - updates on the key generative AI-focused copyright infringement cases.

I. the mAIn event - without content, “Sora” and generative AI tech are essentially useless: so shouldn’t artists get paid? (tech “unicorns” are everywhere, but where are the media unicorns?)

ChatGPT creator OpenAI and rivals Anthropic, Cohere and Perplexity -- what do all of these generative AI tech “startups” have in common? All are privately held companies valued (or soon to be valued) in excess of $1 billion, making them what are called “unicorns” in the lexicon of Silicon Valley. But here’s another thing they all have in common. They built potentially transformational technology that is essentially useless without the content on which it is “trained” to deliver its results. And they get that requisite content by scraping the vast Internet, sucking up millions of copyrighted works in the process – all without consent.

These generative AI leaders – and other leading genAI tech innovators well on their way to unicorn status (like music-focused Suno and video generator Haiper) – defend their relentless non-consensual “training” practice as answering to the higher calling of social progress. So they respond to any qualms about copyright infringement by claiming fair use as a defense.

But is their training – and what it represents — really “fair” when Silicon Valley continues to rack up billions based on its dependence on massive amounts of copyrighted works, while the artists and media and entertainment companies responsible for creating those works continue to face financial uncertainty and be pummeled by Wall Street?

Where are the media and entertainment unicorns that fuel this relentless march in the name of tech progress?

This fundamental issue of generative AI-based copyright infringement is playing out in the courts right now, and so far Silicon Valley is winning. The federal courts that have addressed the issue have essentially concluded that there can be no actionable infringement when an artist’s copyrighted works are included in a training data set of millions. The rationale seems to be that generative AI’s end result – its output – will not be substantially similar to the allegedly infringed work and, therefore, cannot cause direct harm to its creator. It’s kind of an “only one screw in a machine with thousands of parts” rationale that, upon first blush, sounds kind of logical. If that machine spits out plastic fasteners, they look nothing like that screw.

But hey, wait a minute! Doesn’t the machine’s manufacturer need to pay for that screw no matter what that machine spits out? Of course it does! The screw is an “ingredient” in the overall manufacturing process. It creates value. And — here’s the kicker — it also took value (resources) to make.

so is big tech’s “fair use” refrain really fair?

With this context in mind, let’s zoom back out to the macro issues at play here. Isn’t Big Tech’s “fair use” refrain just one big justification for another massive transfer of wealth from artists and creators to big tech companies like Apple, Google, TikTok and Meta that have built their multi-billion and trillion dollar valuations on the backs of content creators for decades?

Let’s take hot new generative AI music startup Suno, which just launched its jaw-dropping latest version last week. Just by typing in a few words as a prompt – for example, create “a sad folk song asking why there aren't any media unicorns, because tech unicorns are everywhere” -- Suno generates professional-sounding music tracks in seconds, complete with song title, lyric sheet, and thumbnail artwork. You can listen to my prompted song, which Suno automatically titled “The Song of the Unseen Unicorn,” here. It’s scary good, and its lyrics are scarily apt.

How does Suno’s AI music producer do that? I certainly don’t know its tech special sauce. But I know (at least I think I know) that its real secret sauce is the wide world of copyrighted songs and recordings that it apparently sucks into its training vortex. From what I can see (and I’ve looked hard), the music it uses for training hasn’t been licensed, despite the company’s recent “Trust & Safety” message that rather obtusely states that it doesn’t “recognize references to other artists” because they “are not here to make more Fake Drakes.” I’m quite sure that only means that Suno-generated tracks will not replicate the voice of any specific artist. It doesn’t mean that Suno doesn’t include hordes of copyrighted works in its training data set. I reached out several times to Suno to comment and confirm (or challenge) this, but never heard back.

is it one big “heist”?

If my understanding is correct (and I’m quite sure it is), isn’t this essentially, in the provocative words of one music writer, just one big heist? If all of us can simply create high quality music in seconds with just a few keystrokes, then won’t there inevitably be less room for human created tracks? Spotify and other music streamers are already being overrun by AI generated tracks, so how can it be argued that there won’t be at least some meaningful market substitution for songs by the human artists on which the generative AI received its music training?

The simple answer is that it can’t! Some meaningful fraction of market demand for human-generated music will be lost to our new artificial composers.

Some will argue that this is nothing new, because artists have always been inspired by and “copied” the art of others – the only difference here being that we are substituting machines for humans. But sorry, no – this isn’t the same. Wholesale vacuuming of entire copyrighted songs and recordings is fundamentally different from artists building on top of the creative building blocks of others before them. Individual artists aren’t creating entire systems that are specifically designed to enable mass market commercialization. And oh yes, let’s not forget that copyright law is there to rein in individual humans if their “inspiration” crosses the copyright line.

Many others will point out – correctly this time – that new technology has disrupted the media and entertainment industry from the beginning of time, decimating some jobs while creating entirely new categories of jobs. And it’s true, it is a tale as old as time. Synthesizers enabled music pioneer Gary Numan to create an entirely new musical genre, for example – and he initially received significant blowback for destroying demand for session musicians (watch and listen to my interview with Numan here).

But Numan was no tech behemoth. He didn’t create the tech, he just used it.

the lessons of YouTube

A more direct comparison is YouTube. YouTube was, from its beginning, a revolutionary new (and very cool) technology. But it was nothing without the content that passed through it. Originally, that content was expected to be user generated content, enabling anyone to broadcast themselves (“broadcast yourself” was its original tagline, in fact). That meant, of course, that users gave their consent when they uploaded their videos. But it was only when users began to upload professionally produced content – much of which was copyrighted and not their own – that YouTube really broke out. Remember that SNL skit “Lazy Sunday”? That was a critical inflection point.

At first, YouTube looked the other way at this reality and built a massive customer base in record time. Silicon Valley bros Chad Hurley and Steve Chen took that base and the value created — much of which was built on top of those unlicensed copyrighted works — and sold it to Google for $1.65 billion (yes, YouTube was one of the original unicorns). Ultimately, the glaringly obvious fact that creators were receiving no compensation for the massive value created by their content led to major infringement litigation that culminated in today’s Content ID system that pays creators for any unlicensed use of their works.

For similar reasons, isn’t it glaringly obvious that creators should be compensated for the fundamental foundational value they bring to otherwise essentially useless generative AI tech? The essential ingredient that fuels genAI tools is content.

artistic independence and just compensation

This isn’t some anti-tech rant. It’s meant to be a simple declaration of artistic independence to be able to choose to participate fairly in a new generative AI world that builds its value on the backs of their works. Artist “opt in” systems from the likes of generative AI companies Lore Machine (the story visualization platform I featured in last week’s newsletter) and Rightsify (which says its Hydra music generator trains only on a library of music it owns) point the way.

Given this essential core value creators bring to Silicon Valley, shouldn’t there be some media and entertainment unicorns too? There seems no reason why privately held media startups or content franchise makers can’t be worthy of that badge of honor given this reality. After all, it’s the content that is transcendent and feeds the soul – the necessary “yin” to technology’s “yang.”

Think about it this way. Not even the great Bruce Springsteen – one of the most successful and prolific human music generators of all time – comes close to unicorn status. He sold his massive catalog of music, including all of his songs and recordings, for $550 million a few years ago. To us, he’s The Boss! His music will inspire lives for generations to come. But in the eyes of Silicon Valley, he’s just another wannabe unicorn startup (and is only halfway there).

Maybe Bruce would sympathize with these lyrics from the chorus in my Suno-generated song, “The Song of the Unseen Unicorn”:

“Oh, where are the media unicorns? (where are they now?),

Lost in a world that doesn’t see their crown,

In a society of screens, clicks and endless streams,

The media unicorns, unseen, it seems.”

(What do you think? Send me your feedback and reach out to me at peter@creativemedia.biz.)

II. the trAIler - 10 “quick hit” AI headlines from last week

(1) Elvis shakes his hips again! Tennessee now has the nation’s first law that, according to the Human Artistry Campaign, “establishes strong protections for everyone’s unique voice and likeness against unauthorized artificial intelligence deepfakes and voice clones.” The law is known as the ELVIS Act - as in “Ensuring Likeness Voice and Image Security.” Pretty clever, huh? This may be one of the first new Tennessee laws that I can actually stand behind!

(2) Google 250 Million, France 1! Europe moves first again - this time French regulators fined Google 250 million euros for training its AI-powered chatbot Gemini on content from publishers and news agencies without consent and compensation. Bravo France, bravo! (My feature story above lays out why I feel that way.)

(3) But Apple gives its big tech bro Google a solid. Wasn’t all bad news for Google though. In a story that surprised many, Apple reportedly is in advanced talks to bring Google’s Gemini AI to its iPhones and other products. Not inked yet. But my Magic 8-ball says it will happen. Read more here.

(4) Is OpenAI CEO Sam Altman pulling a Steve Jobs? After shocking many in Hollywood when he unveiled his mind-boggling text-to-cinematic quality video generator “Sora,” Altman is now courting Hollywood to embrace it. Seems a lot like two decades ago when Jobs presented himself as the savior to the music industry with Apple iTunes. Read more here.

(5) Meanwhile, Google alums steal a scene from OpenAI’s “Sora” Hollywood story. Google DeepMind alums just released their own video generation tool “Haiper.” Why “Haiper”? Who knows. But not sure it will make Hollywood “happier” (cue laugh tracks). Read more here. And check this out - I tried Haiper, using this prompt: “create a video of two small dogs fighting for a bone in a backyard on a dark drizzly day.” Watch the resulting video here (note: Haiper is limited to generating 2-second high-resolution videos right now, but still remarkable).

(6) ChatGPT “actually kind of sucks.” Not my words. They’re OpenAI CEO Sam Altman’s! So what does he intend to do about it? Well, release ChatGPT’s next version of course. He recently hinted that a new more powerful version 5 may be coming soon - and will be “materially better” (which, in tech talk, means massively better). Are we ready for it? Read more here.

(7) Sam likely also has unkind words for OpenAI’s GPT App Store. It’s apparently off to a surprisingly slow start. Could it be that generative AI is over-hyped? Nah! (almost had you though!). Read more here.

(8) Perplexity perplexes Google and uses Google’s own data to do it! I’ve mentioned generative AI search tool Perplexity before (if you haven’t tried it, you should). This Jeff Bezos-backed “startup” is impressive – a real alternative to Google search. Its punchline? It uses Google’s data to do it – and soon is expected to have official “unicorn” status ($1 billion valuation). Read more here.

(9) Will the real human journalist please stand up to collect your prize? Five of this year’s 45 finalists for the coveted Pulitzer Prize for journalism reportedly used AI in their relevant works. Read all about it here.

(10) Through it all, Nvidia and CEO Jensen Huang rocked on! The company held its developers’ conference, black leather jacketed Huang announced massive new chip processing power, and investors continued to gobble up its stock (which steadily rose through the week … again).

III. the cocktAIl - your AI mix of “must attend” AI events

After all, it’s always happy hour somewhere!

(1) Register for this WEDNESDAY’S (MARCH 27th) “AI & Music” virtual roundtable where I interview Stewart Copeland of THE POLICE and Alex Ebert of EDWARD SHARPE!

My first Digital Hollywood virtual roundtable is this WEDNESDAY, MARCH 27th, at 11 am Pacific. The topic is generative AI and music - I interview multi-Grammy winning artist Stewart Copeland (drummer of legendary band The Police) and Alex Ebert (multi-Platinum recording artist of Edward Sharpe and Golden Globe winning composer) about how they feel about generative AI and how it will impact their art and their livelihoods.

(2) On April 4th, I’ll be moderating a high-powered panel about AI and gaming with leading experts in those worlds. It’s the “Gaming Brunch” hosted by ThinkLA and sponsored by Activision Blizzard – and it takes place at LA’s Skirball Center. Check out the information here and register for the event.

(3) AI LA’s “A.I. on the Lot” on May 16th, 2024 is a “must attend” event. Check it out and sign up here. I attended last year. It’s where all the LA-based media and generative AI “movers and shakers” meet, learn, and collaborate. You gotta be there.

(4) Check out Digital Hollywood’s first generative AI-focused virtual summit, “The Digital Hollywood AI Summer Summit,” on four days in July (July 22nd - 25th). The sessions are outstanding. Learn more here.

Reach out to me at peter@creativemedia.biz with your feedback & submissions. I may feature them.

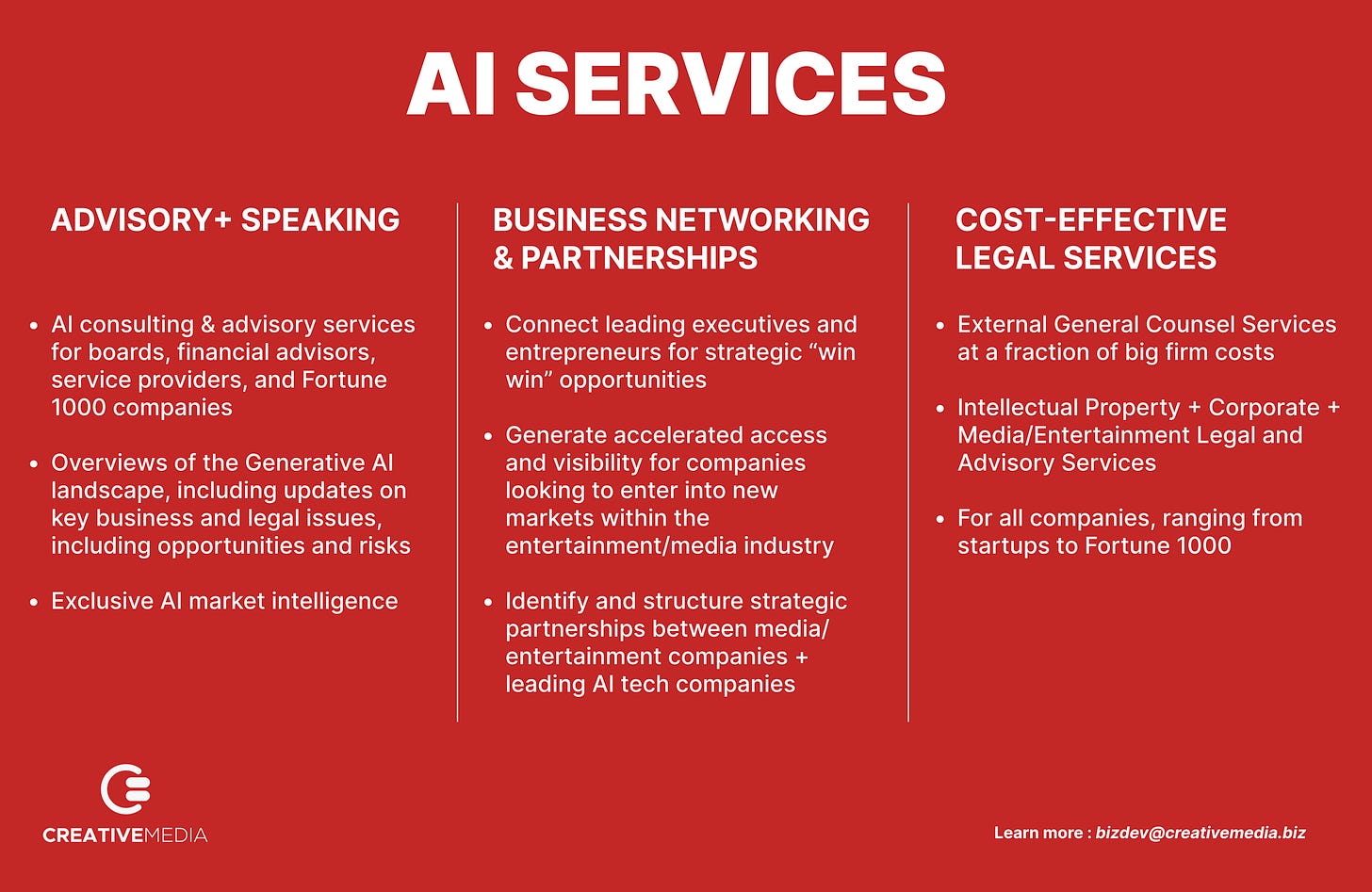

check out Creative Media and our AI-focused services

IV. the AI legal case tracker - updates on key AI litigation

I don’t lay out the facts of each case - and the latest developments - here in every newsletter. Instead, click on this “AI case tracker” tab on “the brAIn” website. You’ll get all the up-to-date information you need. These are the cases I track.

(1) The New York Times v. Microsoft & OpenAI

(2) Sarah Silverman, et al. v. Meta

(3) Sarah Silverman v. OpenAI

(4) Universal Music Group, et al. v. Anthropic

(5) Getty Images v. Stability AI and Midjourney

Great post Peter. The YouTube analogy is apt. But remember that Viacom owned the MTV Generation and could have partnered with YouTube early, instead of engaging in a decade of litigation. Yes, it resulted in ContentID, but MTV is irrelevant to my kids today. In retrospect, the better approach for Viacom would have been to cooperate early with YouTube and maintain creative relevance. There is a lesson in that for us today with AI. There is an opportunity to engage constructively with AI companies and find ways to license copyrighted works, and for creators to be appropriately compensated (alongside a reasonable threat of litigation).