"Ethically sourced AI”: even YouTube is a believer this time (well, sort of ...)

It’s time for AI training transparency and labeling (not to mention payment to creators)

Here’s your Monday morning brAIn dump! First, “the mAIn event” (my feature story). Next, “the cocktAIl” - my special AI event mixology. Finally, “the AI legal case tracker” - updates on the key generative AI copyright infringement cases. So let’s get right into it!

I. the mAIn event (feature story): “Ethically sourced AI” (and the real meaning of training on “publicly available” works)

OpenAI’s unveiling of its cinematic-quality AI video generator “Sora” – and the power of what it represents – shook Hollywood a few weeks ago. Its shocking quality certainly raised the question of what it means for Hollywood production in the future. But Sora also, once again, puts the spotlight on the fundamental issue of AI “training” on copyrighted works without creator consent.

Of course, when asked, OpenAI – like most generative AI companies – never comes right out and says that’s what it does. The company simply says that it trains Sora on “publicly available” works. While that sounds innocuous enough, it really isn’t. If it were, why would the company be so cagey about it? CTO Mira Murati, when directly asked whether Sora trained on YouTube videos, deflected. “I’m actually not sure about that,” she said – and she’s the CTO!

Well, we’re now certain – and surprise, surprise, it’s what we’ve suspected all along. “Publicly available” means simply that the food OpenAI uses for training its AI – because that’s what it is to its voracious AI pet – is content accessible online, much of which is copyrighted of course. Thanks to some intrepid journalistic digging by The New York Times, we now know that OpenAI trained its ChatGPT LLM on (among other things) over one million hours of YouTube videos, all without payment or consent. And here’s a big “tell” about how OpenAI itself really feels about what it’s doing. The name for its internal program that takes YouTube videos and transcribes them into text for training purposes is “Whisper,” as in, let’s keep things on the down low. I’m no linguist, but it certainly seems like an admission of sorts to me!

Apparently, even YouTube – the copyright infringing OG – agrees. YouTube doesn’t precisely couch the issue in those terms, of course, perhaps because it’s reportedly doing the same thing itself. But just a few days ago, CEO Neal Mohan bemoaned the fact that Sora’s non-consensual training on millions of its videos violates its terms of service. That’s a rich claim coming from YouTube, since YouTube built its initial base by enabling users to upload any videos they wanted – including copyrighted videos like SNL’s notorious “Lazy Sunday” that blew the lid off – without license or compensation of any kind. One could say that the U.S. copyright laws and notices were the relevant “terms of service” at the time.

Given that inconvenient truth, some would say YouTube’s position sounds a bit, shall we say, hypocritical. But putting that aside for the moment, Mohan has a point. Why should OpenAI – or any other AI company – be able to feed off the works of others in order to build its value as a tool (or whatever you call generative AI)? And even more pointedly, where are the creators in this equation?

Apart from trying to dodge those specific questions, OpenAI, predictably, tries to turn the tables on Google and contends that what it does is effectively no different than what Google itself did when it vacuumed up the entire Internet world – millions of copyrighted works – for its “books” project in order to make them searchable online. At the time, the 2nd Circuit Court of Appeals blessed Google’s actions as being a “fair use,” in a seminal case that is always cited by those in tech who feel that creative works should be considered fodder for some kind of higher calling of infinite progress.

But Google showcased only snippets of those books in its search results – not the whole enchilada. There was no market substitution there. Once a user found the copyrighted work through search, they still would need to actually go out and buy the real thing. That’s a fundamentally different proposition than Sora’s. Sora doesn’t call attention to other copyrighted works, building new channels of monetization for them. Sora, instead, competes directly with them (at least it will when it becomes widely available).

Anyway, if OpenAI were so confident in the righteousness of its position, why be so cagey about it? Because it isn’t confident (it’s embroiled in multiple litigations on the subject, several of which I track in the AI case tracker in this newsletter). As I wrote a few weeks ago, generative AI tech without content is essentially useless. We know that, and they know that. That means artists and creators of those creative works should be compensated. You can’t re-use my article here without my permission simply because it’s been posted. And that basic fact doesn’t change simply because you’ve sucked millions of works into your training vortex. It’s not just about the outputs generated by AI (that’s a separate copyright matter). It’s about the inputs as well (at least, it should be).

At a minimum, it’s hard to argue that OpenAI’s opacity about what’s really going on shouldn’t be confronted head on. All of us (creators and consumers alike), for a whole host of reasons, deserve to know precisely what OpenAI uses in its training data sets. That kind of transparency is precisely what President Joe Biden’s Executive Order about AI calls for. Congress finally took Biden’s hint when just a few days ago Rep. Adam Schiff introduced “The Generative AI Copyright Disclosure Act.” Following the European Union’s own historic legislation on the subject, Schiff’s Act would require anyone that uses a data set for AI training to send the U.S. Copyright Office a notice that includes “a sufficiently detailed summary of any copyrighted works used.”

Essentially, this is a call for “ethically sourced AI” and transparency so that consumers can make their own choices. Think of it like nutritional labeling on food products for consumer safety reasons. “Trust and safety” logically should apply here too, and artists certainly agree. Last week leading musicians like Billie Eilish penned an open letter telling the tech community to knock it off and stop training its LLMs without consent or compensation. So the heat is most definitely on, and it’s up to the creative community to keep the issue on the front burner.

So let’s first pull back the curtain on what’s really going on in the AI sausage factory via demands for transparency. Then we can all confront the copyright legal issues head on with a reality we all understand. To infringe, or not to infringe (because it’s fair use)? THAT is the question – and it’s a question winding through the federal courts right now that will ultimately find its way to the U.S. Supreme Court. And when it does, my prediction is that ultimately even this wacky court will find a way to protect artists in the most basic of ways. Following precedent that even it might just respect (because this Court issued it in the Andy Warhol case), it will find direct harm to creators for non-compensated, non-consensual training – essentially market substitution – which is a new kind of exception it added to relevant infringement “fair use” analyses.

Simply because something is “publicly available” doesn’t mean that you can take it. It’s both morally and legally wrong (I’m an IP lawyer and welcome a healthy debate on that subject). And for god’s sake, be transparent about what you’re doing!

What do you think? Send me your feedback and thoughts at peter@creativemedia.biz.

II. the cocktAIl - your AI mix of “must attend” AI events

After all, it’s always happy hour somewhere!

(1) AI LA’s big “A.I. on the Lot” event is only one month away on May 16th, so register now! I’ll make it really easy for you by giving you a 20% discount. Just use promo code “PETER” when you check out. Check it out and sign up here. This is one of those few “must attend” events. I’ll be there. You should be too.

(2) My next Digital Hollywood virtual AI roundtable event is April 25th, 12 noon Pacific. It’s all about generative AI and video this time – and how OpenAI’s “Sora” and other AI video generators will transform both Hollywood and Madison Avenue advertising. Last time I interviewed The Police’s Stewart Copeland about AI and music. This month’s event features a great “show and tell” – both a live demo of one of the leading generative AI video tools and a discussion with leading media/marketing/tech execs. It’s absolutely free. And absolutely fascinating … and important. You won’t want to miss it. Register here via this link.

(3) Check out Digital Hollywood’s first generative AI-focused virtual summit, “The Digital Hollywood AI Summer Summit,” on four days in July (July 22nd - 25th). The sessions are outstanding. Learn more here via this link.

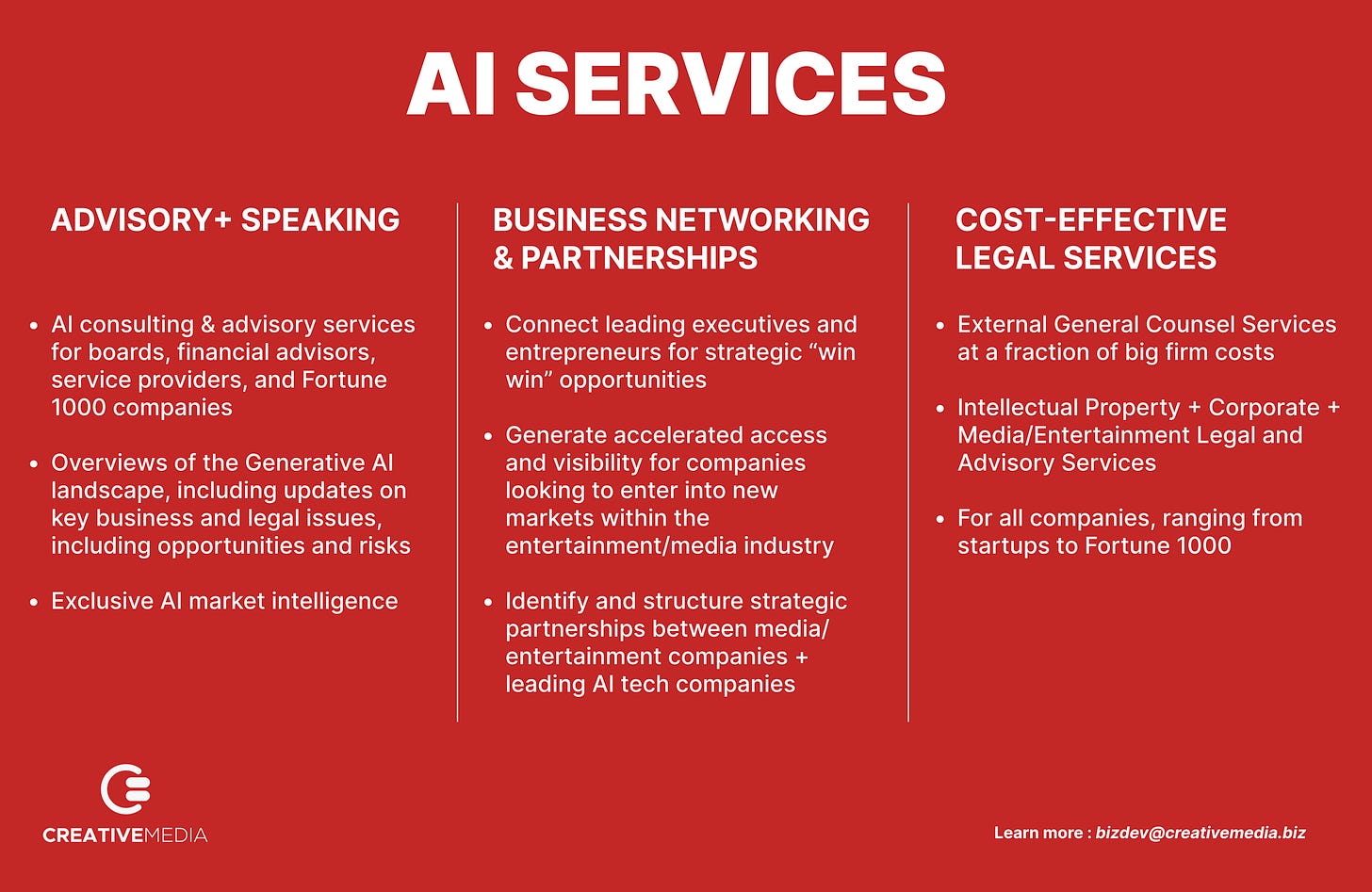

check out Creative Media and our AI-focused services

III. the AI legal case tracker - updates on key AI litigation

I won’t lay out the facts of each case - and the latest developments - in every newsletter. Instead, click on this “AI case tracker” tab on “the brAIn” website. You’ll get all the up-to-date information you need. These are the cases I track (there were many important developments this past week).

(1) The New York Times v. Microsoft & OpenAI

(2) Sarah Silverman, et al. v. Meta

(3) Sarah Silverman v. OpenAI

(4) Universal Music Group, et al. v. Anthropic

(5) Getty Images v. Stability AI and Midjourney